How Can You Scale Sovereign AI with Kubeflow and OpenShift AI?

This post explains how to scale sovereign AI with Kubeflow and OpenShift AI.

As artificial intelligence becomes a key driver of global competitiveness, the idea of sovereign AI—the ability to design, deploy, and operate AI systems independently—has gained significant importance. However, achieving this independence is not straightforward. A recent study involving more than 900 IT leaders and AI practitioners revealed a clear gap between ambition and execution: while 72% of organizations express a strong interest in AI, only 7% in the EMEA region are successfully realizing measurable outcomes.

The findings point to two major obstacles: data privacy concerns and fragmented infrastructure environments. These issues are slowing down AI progress, making sovereign AI less of a theoretical concept and more of a practical necessity. By addressing these challenges, sovereign AI enables organizations—especially those in regulated sectors—to transition from experimentation to production while maintaining:

- Compliance: Meeting strict regulations such as GDPR, the EU AI Act, and data residency requirements.

- Resilience: Ensuring operations continue even during geopolitical disruptions or internet isolation.

- Autonomy: Retaining full ownership of intellectual property, including models and training data, while avoiding vendor lock-in.

Platforms like Red Hat OpenShift AI provide the foundation for building secure, air-gapped AI environments where organizations maintain full control over their data, models, and outcomes.

Understanding the Challenge: A Real-World Scenario

Consider a hypothetical case inspired by real-world challenges. Dr. Aris, a Chief Data Officer in a European Ministry of Health, oversees vast datasets including anonymized patient records, genomic data, and epidemiological history. His goal is to develop a national-scale language model to assist clinicians in diagnosing rare diseases.

However, the organization faces a growing issue: researchers are informally using public AI tools to accelerate their work, unknowingly risking sensitive data exposure. To solve this, they need a secure internal platform that matches the usability of public cloud services.

Dr. Aris encounters two major roadblocks:

- Cloud dependency risks: Many AI service providers require data to be processed in foreign public clouds, potentially violating local regulations.

- Operational complexity: Building an in-house solution proves difficult, with inefficient GPU utilization and resource bottlenecks slowing progress.

To overcome these challenges, the team adopts a sovereign AI approach using OpenShift AI along with Kubeflow and Feast. This allows them to create a secure, self-contained “AI factory” within their own infrastructure. Training workflows become automated, feature data is consistently managed, and all operations remain within national boundaries.

The Three Foundations of Sovereign AI

To move toward true digital independence, organizations must control three critical layers of the AI ecosystem:

1. Technical Sovereignty

Organizations need flexibility and transparency in their infrastructure. By leveraging open, hardware-agnostic platforms, they can avoid dependency on a single vendor and adapt to supply chain changes. Open-source technologies also allow full visibility into system operations, ensuring auditability and long-term control.

2. Data Sovereignty

Sensitive data must remain within defined geographic and legal boundaries. At the same time, data scientists should have seamless access to that data without compromising security. Achieving this balance is essential for both innovation and compliance.

3. Operational Sovereignty

The control layer—responsible for managing workloads, access, and resources—must operate locally. Relying on external SaaS-based control systems introduces risks, making it essential to maintain full control within the organization’s own environment.

A Practical Architecture for Sovereign AI

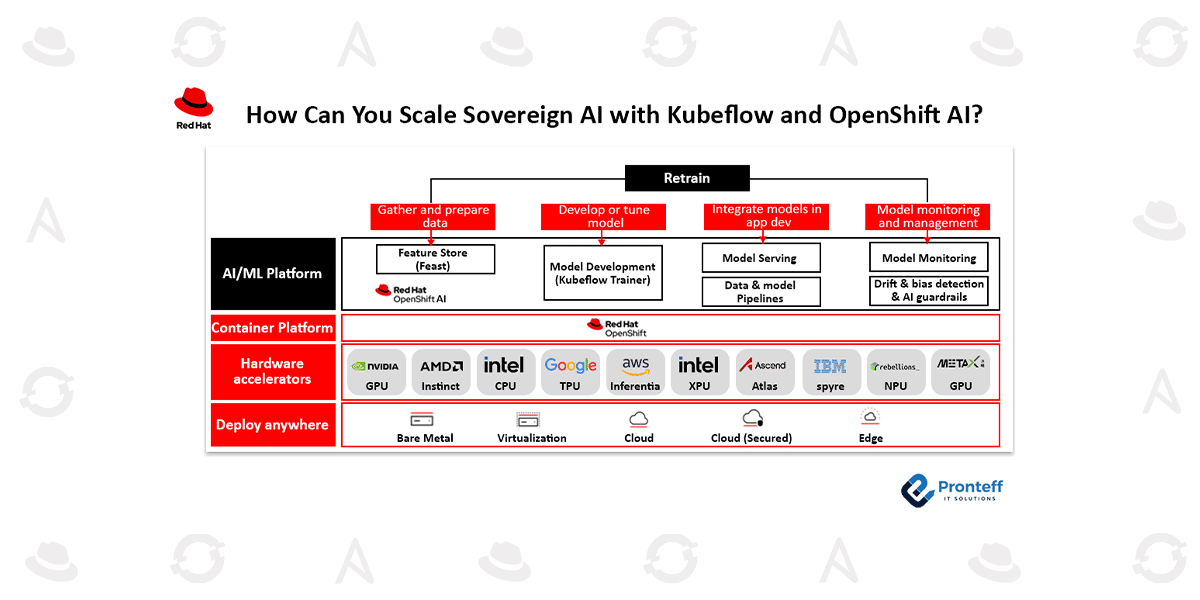

A robust sovereign AI solution is built on a layered architecture that integrates open-source technologies. Red Hat AI acts as the central platform, combining Kubernetes-based infrastructure with tools like Kubeflow for training and Feast for data management.

This approach ensures:

- Full ownership of the AI stack

- Transparency through open-source components

- Flexibility to adapt and scale

Key Components of the Solution

High-Performance Training with Kubeflow

Kubeflow simplifies distributed model training on private infrastructure. It allows data scientists to run large-scale AI workloads without worrying about underlying hardware complexity.

- Ease of use: A unified interface simplifies distributed training

- Reliability: Jobs are managed efficiently, minimizing resource waste

- Efficiency: GPU resources are allocated dynamically for optimal utilization
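To make the GPU allocation point concrete, here is a minimal sketch of how a distributed training job might be described for the Kubeflow Training Operator. It assumes the `kubeflow.org/v1` `PyTorchJob` resource; newer Kubeflow releases may use a different resource, so check what your cluster exposes. The job name, namespace, and image registry are hypothetical placeholders, and the helper functions are illustrative rather than part of any Kubeflow SDK.

```python
import json


def build_pytorchjob(name, image, workers, gpus_per_replica, namespace="sovereign-ai"):
    """Build a PyTorchJob manifest as a plain dict.

    Assumes the Kubeflow Training Operator v1 API (kubeflow.org/v1).
    The default namespace is a hypothetical placeholder.
    """
    if workers < 0 or gpus_per_replica < 1:
        raise ValueError("workers must be >= 0 and gpus_per_replica >= 1")

    def replica(count):
        return {
            "replicas": count,
            "restartPolicy": "OnFailure",
            "template": {
                "spec": {
                    "containers": [
                        {
                            "name": "pytorch",
                            "image": image,
                            # Each replica requests a fixed number of GPUs.
                            "resources": {"limits": {"nvidia.com/gpu": gpus_per_replica}},
                        }
                    ]
                }
            },
        }

    specs = {"Master": replica(1)}
    if workers:
        specs["Worker"] = replica(workers)

    return {
        "apiVersion": "kubeflow.org/v1",
        "kind": "PyTorchJob",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"pytorchReplicaSpecs": specs},
    }


def total_gpus(job):
    """Sum the GPUs requested across all replicas of the job."""
    total = 0
    for spec in job["spec"]["pytorchReplicaSpecs"].values():
        container = spec["template"]["spec"]["containers"][0]
        total += spec["replicas"] * container["resources"]["limits"]["nvidia.com/gpu"]
    return total


if __name__ == "__main__":
    # Hypothetical image hosted in an internal, air-gapped registry.
    job = build_pytorchjob("rare-disease-llm", "registry.internal.example/train:latest", 3, 2)
    print(json.dumps(job, indent=2))
```

The resulting dict can be serialized to JSON or YAML and applied to the cluster. Because the image comes from an internal registry, the job can run without reaching outside the environment.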

Data Management with Feast

Feast acts as the bridge between raw data and machine learning models, ensuring consistency and traceability.

- Maintains point-in-time-correct historical data for training, so each training example only sees feature values that were known at that moment

- Supports both batch (offline) and real-time (online) data access

- Provides a centralized feature registry for consistency across teams
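The idea behind historically accurate training data can be illustrated with a small point-in-time join. The sketch below is plain Python and is not Feast's implementation or API. It only shows the rule such a join follows: for each training row, use the latest feature value recorded at or before that row's timestamp. The entity IDs and values are invented for illustration, and details a real feature store handles, such as feature expiry windows, are left out.

```python
from bisect import bisect_right
from datetime import datetime


def point_in_time_join(entity_rows, feature_rows):
    """Attach to each (entity_id, event_time) row the latest feature value
    observed at or before event_time, or None if there is none.

    entity_rows:  iterable of (entity_id, event_time)
    feature_rows: iterable of (entity_id, timestamp, value)
    """
    # Group feature observations per entity, ordered by timestamp.
    history = {}
    for entity_id, ts, value in sorted(feature_rows, key=lambda r: (r[0], r[1])):
        times, values = history.setdefault(entity_id, ([], []))
        times.append(ts)
        values.append(value)

    joined = []
    for entity_id, event_time in entity_rows:
        times, values = history.get(entity_id, ([], []))
        # Number of observations with timestamp <= event_time.
        idx = bisect_right(times, event_time)
        joined.append((entity_id, event_time, values[idx - 1] if idx else None))
    return joined


if __name__ == "__main__":
    features = [
        ("p1", datetime(2024, 1, 1), 5.0),
        ("p1", datetime(2024, 3, 1), 7.0),
    ]
    labels = [("p1", datetime(2024, 2, 15))]
    # The March value is ignored because it was not yet known in February.
    print(point_in_time_join(labels, features))
```

Without this rule, a training set could include values recorded after the event being predicted, which inflates offline accuracy and makes results hard to reproduce.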

End-to-End Sovereignty: From Training to Deployment

Sovereign AI must cover the entire lifecycle—not just model development. Once models are trained, they should be deployed and operated within the same secure environment.

With integrated model serving capabilities, organizations can:

- Process real-time data locally without relying on external APIs

- Optimize infrastructure usage for large AI models

- Maintain complete control over inference and monitoring
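One small way to back up the "no external APIs" goal in application code is to refuse inference URLs that do not resolve to an in-cluster or internal host. The sketch below is an assumption-laden illustration: `.svc.cluster.local` is the default Kubernetes service domain, while `.internal.example` and the sample URLs are hypothetical. A check like this complements network-level controls and does not replace them.

```python
from urllib.parse import urlparse

# Host suffixes treated as "inside the boundary".
# ".internal.example" is a hypothetical placeholder for an organization's own domain.
ALLOWED_SUFFIXES = (".svc.cluster.local", ".internal.example")


def is_local_endpoint(url, allowed_suffixes=ALLOWED_SUFFIXES):
    """Return True only if the URL's host ends with an allowed internal suffix."""
    host = urlparse(url).hostname
    if host is None:
        return False
    # Normalize case and drop a trailing dot from fully qualified names.
    host = host.lower().rstrip(".")
    return any(host.endswith(suffix) for suffix in allowed_suffixes)


def require_local(url):
    """Raise before any request is made to an endpoint outside the boundary."""
    if not is_local_endpoint(url):
        raise ValueError(f"refusing to call non-local inference endpoint: {url}")
    return url


if __name__ == "__main__":
    print(is_local_endpoint("https://llm.models.svc.cluster.local:8443/v2/models/demo/infer"))
    print(is_local_endpoint("https://api.public-llm.example.com/v1/chat"))
```

Calling `require_local` at the point where a client is configured keeps accidental references to public endpoints from reaching production unnoticed.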

This creates a seamless pipeline: data collection → model training → deployment, all within a controlled and secure ecosystem.

Final Thoughts

Building sovereign AI requires more than local infrastructure—it demands a well-designed architecture that prioritizes security, scalability, and governance.

By combining platforms like OpenShift AI with tools such as Kubeflow and Feast, organizations can establish AI systems that are:

- Secure by design: Data never leaves the protected environment

- Scalable: Distributed computing enables efficient growth

- Auditable: Clear data lineage ensures compliance and reproducibility

Ultimately, sovereign AI empowers organizations to innovate confidently while maintaining full control over their data and technology—turning independence into a strategic advantage.